WEBINAR

Supercharging Your Promotional and Mix Strategy with RAI Intelligent Assistants.

How to Grow, Deliver, and Enhance Capabilities Faster (Even with Imperfect Data) Using Cognitive AI-Powered Copilots.

June 14, 2023 | 03:00 PM CET

Inflation directly increases promotional costs. How do you manage each promotional folder through a clear ROI lens, combining brand strategy, market share growth, and profitability at the same time? Artificial Intelligence, delivered through Intelligent Assistants, can step-change your promo strategy through alerting, simulations, and recommendations.

But retail is detail. Once your promo strategy is designed, what is the impact on your mix management? Are you promoting the right pack, in the right size, in the right channel, for the right retailer, with the right profitability?

WHO IS THIS WEBINAR FOR?

- Revenue management teams

- Sales & Category management teams

- Finance teams

- Anyone interested in leveraging Intelligent Assistants for Promotion & Mix strategy

WHAT YOU WILL LEARN

- What AI brings to Revenue Management strategy (i.e. Promo & Mix)

- How Intelligent Assistants can strengthen your Promo & Mix strategy

- How to delegate competitor alerts to an Intelligent Assistant

- How Intelligent Assistants will accelerate adoption across all teams

Q&A from the Webinar:

Cost is one of the inputs to return on investment, which has two sides: cost and benefit. The benefit side is the uplift over an estimated baseline. The cost side, which is probably what you are most interested in, can come from two or even three places. One is the discount applied, which we can detect if there was one. Additionally, we can assign a default cost to the promo mechanics that mirrors yours, so that you can check whether, at the same level of cost, your competitors’ promo activities are more or less efficient. The last is the average industry cost, which can also serve as a default for the analysis.

A number of input parameters can go into the ROI calculation, and, to some extent, we can customize it per client or even per channel and chain combination.
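As a rough illustration of the two sides described above, the sketch below computes a promo ROI from the uplift over an estimated baseline and a cost taken from the detected discount, falling back to a default cost per unit when no discount was detected. The function name, its parameters, the use of a pre-discount unit margin, and the fallback order are all assumptions made for illustration, not the platform's actual calculation.

```python
from typing import Optional


def promo_roi(
    promo_units: float,
    baseline_units: float,
    unit_margin: float,
    detected_discount_per_unit: Optional[float] = None,
    default_cost_per_unit: float = 0.0,
) -> float:
    """Return ROI = (benefit - cost) / cost for a single promotion.

    All inputs are hypothetical: unit_margin is assumed to be the margin
    per unit before the discount, and costs are expressed per unit sold.
    """
    # Benefit side: incremental units over the estimated baseline, valued at margin.
    uplift_units = promo_units - baseline_units
    benefit = uplift_units * unit_margin

    # Cost side: prefer the detected discount, otherwise fall back to a
    # default cost (for example a mirrored or industry-average figure).
    if detected_discount_per_unit is not None:
        cost_per_unit = detected_discount_per_unit
    else:
        cost_per_unit = default_cost_per_unit
    cost = cost_per_unit * promo_units

    if cost <= 0:
        raise ValueError("promotion cost must be positive to compute ROI")
    return (benefit - cost) / cost


# Made-up numbers: 1,500 units sold on promo against a 1,000-unit baseline.
roi_detected = promo_roi(1500, 1000, unit_margin=2.0, detected_discount_per_unit=0.5)
roi_default = promo_roi(1500, 1000, unit_margin=2.0, default_cost_per_unit=0.4)
```

Running the same promotion through both cost sources, as in the last two lines, is one way to compare your own promo efficiency with a competitor's at the same assumed cost level.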

Yes, it is possible. The platform itself is an ecosystem of puzzle pieces, which simply means you can plug and play its different elements. For example, if you have a specific module that already handles the price pack architecture, you can plug it into the portal and have it wired to the rest of the capabilities.

If you want to use analytical capabilities you’ve already developed in other ecosystems, they can be wired in as well.

If you want to use your own algorithm, for example one that calculates price elasticity, because you think it explains how your business works better than what we have on the platform, that is also possible.

The platform consists of exactly these kinds of plug-and-play bricks.

This question can be extended to the eCommerce business as well, and there are a couple of elements to the answer. In this webinar, we did not showcase the capability to onboard and integrate external data sources coming from third parties, or the capability to scrape online data (pricing and product metadata included).

We are already doing this for a couple of clients, which means it is possible to have those prices (your own and your competitors’) available on the platform, unified using our AI-driven algorithms and fully matched with internal data sets from a hierarchy and comparability perspective. As a result, we can help you run a proper pricing strategy for your products, regardless of whether they are physical products in a digital space or fully digital products. It’s really a matter of defining the right competition; our product handles the rest.

Yes, you can integrate those elements.

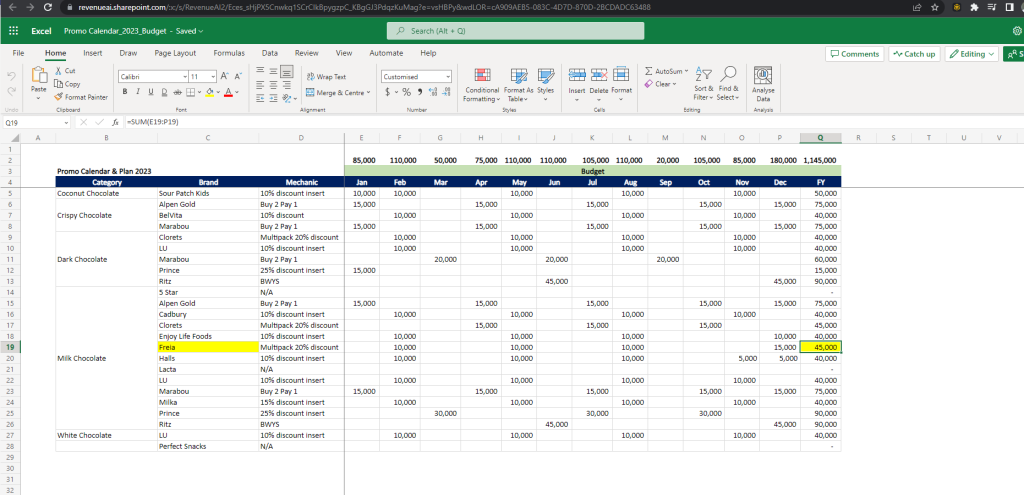

What you can see on the screen is an example of a SharePoint integration:

It is our internal SharePoint integration, used for demo purposes, but it can also be an external SharePoint (i.e. your own) or your knowledge base (e.g. Confluence, a wiki-like repository, or a PDF library). As a matter of fact, it can be any external knowledge base.

Additionally, it can be a third-party data provider (we already collaborate with some of them and can enable their data for your use), for example for cost of goods calculations (e.g. sugar, cocoa, and oil prices). It can even be a third-party data set of your own, whether acquired through a partnership with your retailers or sourced externally in any other way.

Any price change can be detected. There are multiple ways for price data to get into the system. One is to scrape it from an eComm site. Another is to load it from a company’s past internal records, which is the most widely used case we have.

In both cases, we establish what we call a bench price (the standard price). Whatever deviation or change the model observes, it can decide whether it is a one-off, such as a temporary discount or a temporary raise. This can go down to the level of individual stores or even individual websites.

It is not a one-off if different services are involved, for example different time periods for software prices. In that case, it is completely normal to have a different pricing plan for a month-by-month subscription than for a yearly subscription. From this perspective, those count as separate products or services, and each of them has its own bench price and its own discount or raise level. The same applies to identifying promo mechanics in eComm (e.g. BOGOF and similar).
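The bench-price idea above can be sketched in a few lines. This is a minimal illustration only: it assumes the bench price is the median of a product's observed prices and that a deviation is a one-off when it lasts no more than a few consecutive observations. The median, the 5% tolerance, and the `max_one_off_length` threshold are hypothetical choices, not the platform's method.

```python
from statistics import median
from typing import List, Sequence, Tuple


def bench_price(prices: Sequence[float]) -> float:
    """Assumed definition: the median observed price of one product."""
    return median(prices)


def classify_deviations(
    prices: Sequence[float],
    tolerance: float = 0.05,
    max_one_off_length: int = 3,
) -> List[Tuple[int, int, str]]:
    """Return (start_index, end_index, label) for each run of deviating prices."""
    bench = bench_price(prices)
    runs: List[Tuple[int, int, str]] = []
    start = 0
    direction = None  # None means "at bench price"

    # A trailing bench-price sentinel closes any run that reaches the end.
    for i, price in enumerate(list(prices) + [bench]):
        relative = (price - bench) / bench
        if relative < -tolerance:
            current = "discount"
        elif relative > tolerance:
            current = "raise"
        else:
            current = None

        if current != direction:
            if direction is not None:
                length = i - start
                kind = "temporary" if length <= max_one_off_length else "persistent"
                runs.append((start, i - 1, f"{kind} {direction}"))
            start, direction = i, current
    return runs


# Made-up daily prices for a single product in a single store.
history = [10, 10, 8, 8, 10, 10, 10, 12, 10, 10]
events = classify_deviations(history)
```

In line with the answer above, the function would be run separately for each product or service (for example monthly and yearly subscriptions), and at whatever level of detail is needed, such as an individual store or website.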

It depends. If you are interested in having all the algorithms run simulations through different business scenarios and generate recommendations, then you will need historical data. But if you are interested in capabilities that do not require historical data, such as alerting, then it is not necessary.

If the question comes from the fact that you don’t have historical data and need to build it up, consider the case of a high-frequency ecosystem such as eComm or digital, where data granularity can go down to minutes. In this case, depending on how frequently the data is captured, the data set can be built up quickly to provide the base required for simulation and data modeling.

Yes, it is calculated within the tool. The whole Revenue.AI ecosystem is built on a data processing framework that captures the historical calculations and also feeds the simulator with values. The whole idea of AI is to detect historical patterns and understand how a change in price, or in some product attribute, translates into volume sales and total value sales, so that future behavior can be simulated.

Before any simulations can be run, some history is needed. The longer the available history, the more accurate the simulator’s outputs and the higher the confidence in them.

Additionally, this is valid not only for single-product elasticity, but for cross-product price elasticities as well. We can also calculate and simulate the switching matrix directly from the data, so you don’t need to purchase it from data providers if you’re using our platform. Alternatively, if you have elasticities calculated within your own ecosystem, you can compare them with the ones produced by our product, see which gives better, more accurate results, and decide whether to plug some elements of our ecosystem into your internal framework.
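To make the elasticity idea concrete, here is a minimal sketch of one common textbook approach: a constant-elasticity estimate taken as the slope of a log-log regression of quantity on price. It is not the platform's algorithm. The function name and the synthetic data are assumptions, and a single-variable regression like this ignores other drivers (seasonality, promotions, competitor moves) that a production model would need to control for.

```python
import math
from typing import Sequence


def log_log_slope(prices: Sequence[float], quantities: Sequence[float]) -> float:
    """OLS slope of ln(quantity) on ln(price): a constant-elasticity estimate."""
    if len(prices) != len(quantities) or len(prices) < 2:
        raise ValueError("need two equally long series with at least 2 points")

    x = [math.log(p) for p in prices]
    y = [math.log(q) for q in quantities]
    x_mean = sum(x) / len(x)
    y_mean = sum(y) / len(y)

    sxx = sum((xi - x_mean) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("prices must vary to estimate an elasticity")
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    return sxy / sxx


# Synthetic (made-up) history: product A's price, and the units sold of
# product A and of a substitute product B over the same periods.
price_a = [1.0, 1.1, 1.2, 1.3, 1.4]
units_a = [1000 * p ** -2.0 for p in price_a]  # built with own elasticity -2
units_b = [500 * p ** 0.5 for p in price_a]    # built with cross elasticity +0.5

own_elasticity = log_log_slope(price_a, units_a)    # A's volume vs A's price
cross_elasticity = log_log_slope(price_a, units_b)  # B's volume vs A's price
```

Because the synthetic series are generated from known exponents, the estimates recover roughly -2 and +0.5. The same kind of side-by-side check could be used to compare elasticities from your own ecosystem with those from another source.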

It is a cloud-based platform and yes, it is available worldwide. We work across Europe, Asia, North and Latin America, Africa, and elsewhere. It can be implemented in one country and scaled to a global level if that is the company strategy.

So how would a supermarket use this real-time adjustment of an executed promotion, looking at the performance of a promo on the same product at a different supermarket?

And with that in mind, how do you avoid a downward spiral if both AI-generated alerts and suggestions keep focusing on the steps taken by the competitor (again and again)?

It’s not only B2B, but also B2C. The insights apply not only to a typical FMCG company, such as Unilever, that works with distributors or other large companies. The platform can also be used by retailers who have access to direct data feeds, so they can act on what is happening to their products and see the results.

In fact, for B2C companies it is much easier to use our ecosystem, as you have more datasets that can enrich the analysis and be tested immediately in a live ecosystem for quick adjustments.

What was previously mentioned about the eComm channel can be used by an FMCG company such as Unilever to gather data from the B2C perspective. If you are scraping this data properly, you can see what is happening on different websites. For example, you can see what is happening to the products you sell online at Carrefour France and Auchan France, whether it is happening consistently or there are differences between them, and you can get alerts on those differences.

When it comes to the downward spiral, this is a question of finding a steady state. If you are currently involved in an action-response cycle with your competitors in the physical world, it is a bit different. In the digital world, it will happen much faster, assuming that all the competitors are using the same tools, so it will most likely be inevitable. In fact, this is already the case in the B2C digital world, where product prices converge to a steady state and the action-response is triggered automatically (e.g. home appliances, electronics).

But the beauty of it is that a steady state will be found: this is something the AI will do through its recommendations. Your value contribution comes from where your brain power can be deployed: you will come up with new ideas that change what is happening in the market, because you will have the time to do so.

Moreover, the assumption that all competitors will be using the same tools, with the same algorithms performing in the same way, will not hold over the next couple of years.

This depends on the scale. The pilot could take anything from a couple of days to a couple of weeks. This is the typical implementation length.

When it comes to the number of people needed, we need at least one person who is willing to start and who knows the company’s data and processes. The beauty of this is that you can scale your capabilities 10x or 100x with the help of these tools, provide value for your business so that it grows immediately, and start accelerating from there.

Yes. You can run the same kind of Copilot search, and have the same discussion with a fully automatically scripted and AI-enriched ecosystem, on top of your existing Power BI or Tableau reports, or similar tools.